Vibe Coding Grew Up. Now It’s Designing.

Google Stitch: What happens when the interface is just... describing what you want

Google’s Stitch is worth paying attention to, even if you’re not a designer.

Launched at Google I/O in May 2025 and now significantly redesigned, Stitch bills itself as an “AI-native infinite canvas” for UI design. The new version introduces what Google is calling “vibe design”: instead of starting with wireframes or pixel-level specs, you start by describing what you want the product to feel like. Your business objective. The emotion you want the user to have. What’s currently inspiring you. The AI handles iteration from there.

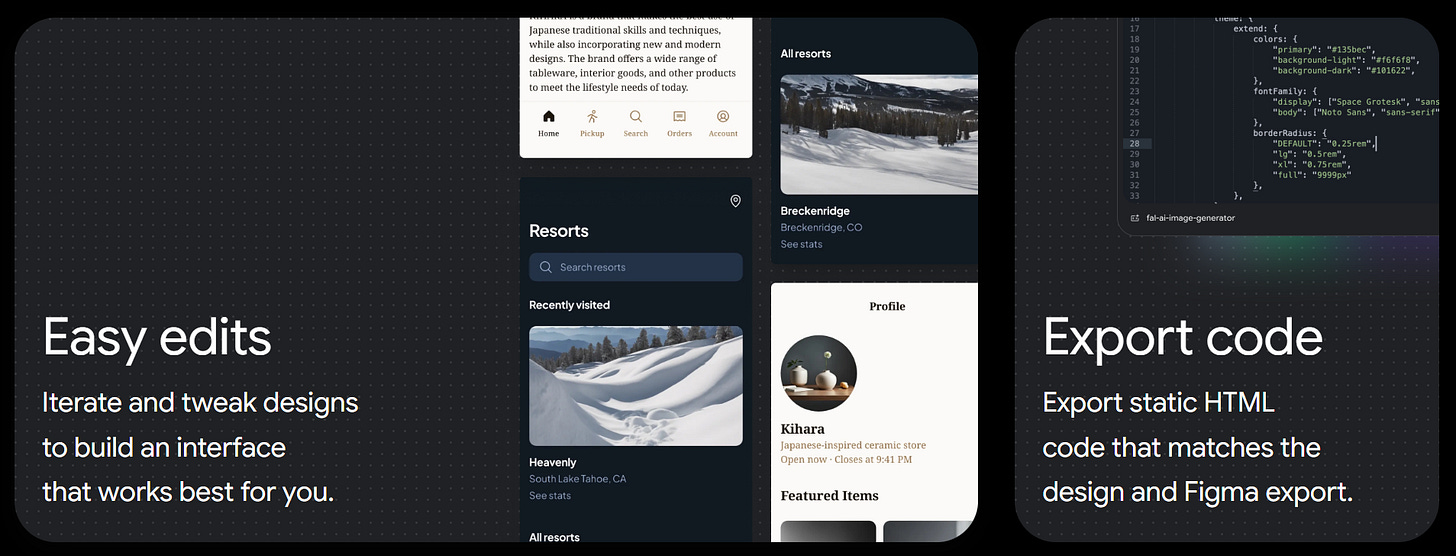

You can now speak directly to the canvas, ask for real-time design critiques, request variations by voice, and generate interactive prototypes without touching a single tool panel. Stitch will even auto-generate the next logical screens in a user flow, including screens you never explicitly specified.

There are rough edges. This is still a Google Labs experiment. But Figma’s stock dropped when the update landed. That tells you something about how the market reads the stakes.

What’s actually happening here

Vibe coding was about abstracting away technical execution. You describe the outcome you want; the model writes the code. Stitch does something adjacent but distinct: it abstracts away design literacy, the gap between having a clear idea and being able to express it visually.

That gap has always been costly. The person with the sharpest instinct for what a product should feel like is often not the person trained to render it. Founders, strategists, product managers, researchers: these are people who understand the intended experience but lack the vocabulary to express it in Figma. Stitch appears designed specifically for them, not as a shortcut for designers, but as an on-ramp for people who have been locked out of the design conversation entirely.

This is a meaningful shift. Not because it makes design “easier” in some trivial sense, but because it changes who gets to participate in shaping what a product looks and feels like before anyone writes a line of production code.

The broader pattern

The bet most AI labs made in 2024 was that software development would be the first domain where AI created real capability leverage. That bet looks correct. But coding was always the most legible example of a broader dynamic: AI peeling away the technical skill layers that have historically separated someone with a clear idea from someone who can execute it.

Design is now following. Writing is arguably already there, though the quality questions are still live. Strategy and analysis are further behind, but the direction is consistent.

One analyst noted that without deterministic constraints (design standards, corporate patterns, contextual requirements), tools like Stitch carry real risk of producing outputs that are technically polished but strategically incoherent. That's a fair concern. The question isn't whether the canvas can produce something beautiful. It's whether the person guiding it knows what they actually want.

That, as it turns out, was always the hard part.

Stitch is currently free in Google Labs beta. It's worth trying even if design isn't your domain: the experience of describing intent and watching a canvas respond is clarifying in ways that are difficult to anticipate until you do it.

Sources: Google Blog | The Register | The Deep View | AI Business