Big Tech Bets: The Competitor No One Puts in the Pitch Deck

On the real competitive question facing AI startups and the companies deciding whether to buy from them

From my series Operating Conditions: on the strategic landscape leaders are navigating. If you’re building an AI startup right now (or considering contracting with one), you already know the obvious risk: execution. What’s harder to see clearly is the structural one. This is an attempt to name it plainly, from someone who has watched it play out from a few different angles.

Every AI startup getting funded right now is making a bet, whether the pitch deck says so or not. VC investments typically take five to eight years to exit. That means your product needs to still matter in a world where Anthropic, OpenAI, and Google have had five to eight more years to build. You’re not only betting on your own execution. You’re betting against theirs[1].

Ethan Mollick put this cleanly in a recent post: almost every AI VC investment is essentially a bet against the vision the big labs have laid out. He’s right. And I’d take it further, because I’m watching this tension from both sides of the table.

I’ve been a founder, and I’ve evaluated vendors for enterprise purchasing decisions. I mentor startups through accelerator programs, and I advise companies on their technology strategy through Bridgecraft. That range of vantage points means I keep seeing the same collision from different angles. On one side: the founding team with a sharp, specific tool, priced well, built for a real workflow. On the other: the enterprise buyer who already has a relationship with AWS or Azure or Oracle, and whose default move will always be to check what the platform offers before even looking at a startup.

This is the competitive landscape that doesn’t get enough honest discussion in startup spaces. Moats, runway, product-market fit: the standard vocabulary is all still relevant, but it now has a gravitational body that it didn’t before. The big labs aren’t just competitors. They’re the tidal force that shapes the entire market.

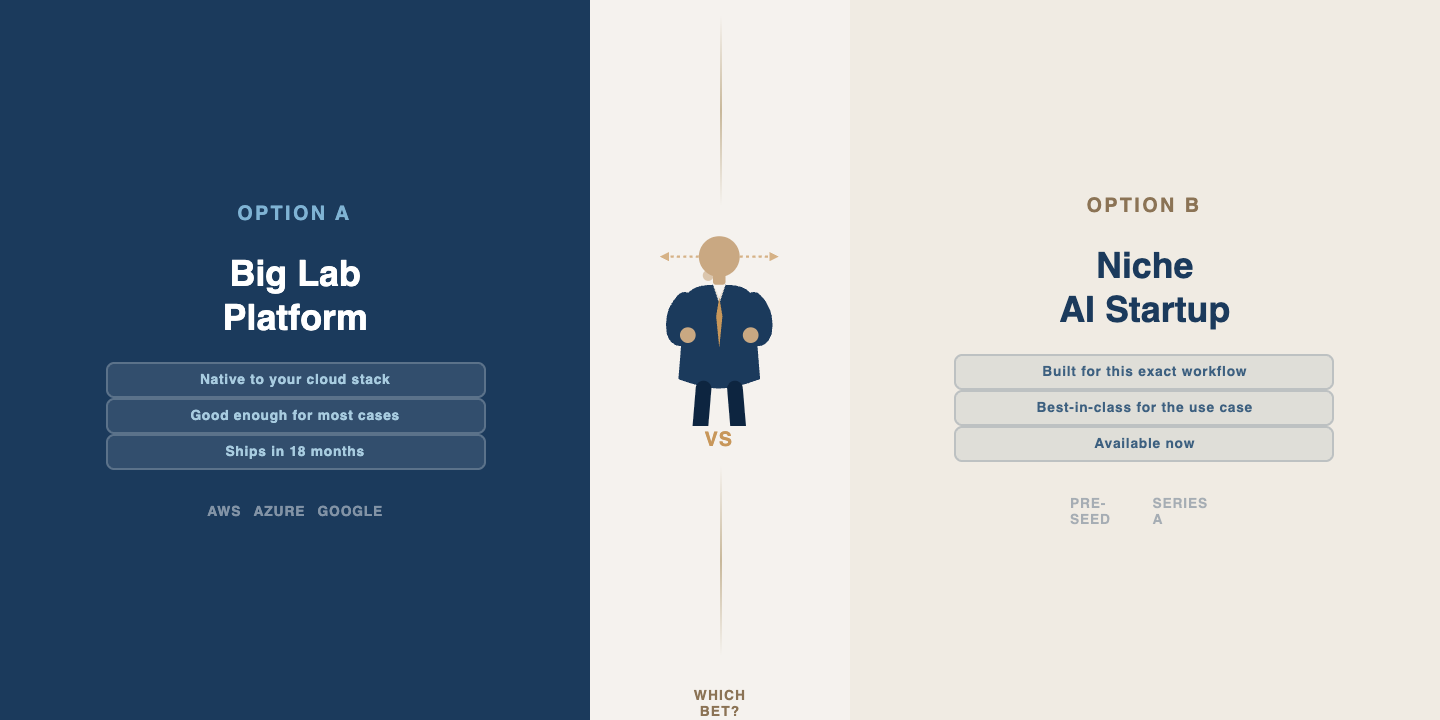

Here’s what I mean concretely. A startup might build a genuinely excellent AI agent for a specific compliance workflow. Solves a real problem, customers love it, price is right. But then AWS announces a general-purpose agent framework at re:Invent, and suddenly every enterprise buyer’s procurement team is asking, “Why don’t we just use the thing that plugs into our existing stack?” The startup’s product might be better. It almost certainly is, for that use case. But “better” is not the only variable in an enterprise purchasing decision, and often it’s not even the most important one.

I’ve seen this inertia from the inside. For over 18 months at one company I worked with, the software team was making the case[2] to switch from one familiar vendor to a clearly superior alternative. The C-suite wouldn’t move. The delay held back prototyping and development, and then leadership used that same slowdown as justification for canceling the initiative later. The decision not to decide became the decision. That’s the loop procurement inertia creates, and it’s grimly common.

But that’s a story about inertia within an organization that had already committed to buying something. There’s a separate problem that doesn’t get discussed enough: buyers who haven’t committed to buying anything yet. I ran into a clear version of this in a recent conversation with a compliance director at a major financial exchange. When I asked about their timeline for deploying particular AI agents internally, the answer was revealing: still roadmap-stage. Not deployed. Not even piloting, really. Still in the “we’re evaluating the landscape” phase.

That’s the other side of Mollick’s observation that deserves attention. It’s not only that startups are betting against the labs. It’s that the enterprise buyers everyone is building for may not be ready to place their bet yet, either. The market timing question cuts both ways. You can have the right product and still be early in a way that burns through your runway before the buyers show up[3].

So what do you do with this?

If you’re a startup founder in AI right now, I think the honest answer is: you need to be very specific about which part of the big labs’ roadmap you think they’ll deprioritize or do badly. Not just “we’re more focused.” That’s table stakes. You need a real theory about why the hyperscaler will leave this gap open long enough for you to build a business in it. You will need to pressure-test that theory with actual buyers, not just other founders.

If you’re a company trying to make AI buying decisions, the honest answer is different but equally uncomfortable. The default will always be to wait for your existing vendor to offer something “good enough.” Sometimes that’s the right call. Sometimes it means you’re buying a mediocre general solution eighteen months from now when you could have had a sharp specific one today. The trick is knowing which situation you’re in, and most organizations don’t have a reliable process for figuring that out.

This is a lot of what I spend my time on at Bridgecraft: helping companies develop a real point of view on their technology landscape, rather than letting vendor relationships and procurement inertia make the decision by default. It’s not a solved problem. But it’s a solvable one, if you’re willing to do the work of actually understanding the terrain instead of just reading the map that your existing vendors hand you[4].

The AI investment landscape isn’t going to get less complicated. The bets aren’t going to get easier. But the quality of your orientation to the landscape you’re operating in, and your awareness of which paths afford the best chance of reaching desirable destinations? That’s where enduring advantage can be found.

1. A slight tangent: earlier today I recalled Demis Hassabis speaking at the MIT Brains, Minds, Machines 10-year anniversary a few years ago, and how he portrayed the future research landscape. Smaller-scale academic labs and companies wouldn’t be able to compete on compute, but could do important things with theory and by testing smaller-scale variations. Now, are those higher-risk spaces by default? The landscape continues to change shape, and with it what counts as stable ground versus ‘difficult terrain’.

2. Impassioned pleas, rigorous cost breakdowns, and everything else.

3. This is particularly difficult, and it often leads investors or other contributors to a venture (or even a smaller-scale project) to look for proxy metrics of arbitrary real value; they may “feel more comfortable” if you had X number of Z things.

4. Knowing who to listen to, and having those people embedded in the right places, is a good start.