Reading Levin’s New Vocabulary Alongside Krakauer’s Complexity: Ingressing Minds and Teleonomic Matter

Part One of a multipart series examining whether Krakauer’s and Levin’s research programs, and the theories behind them, can be reconciled.

Michael Levin has been building a vocabulary. His recent glossary post (Substack, December 2025) and the preprint “Ingressing Minds” lay out a conceptual toolkit that, taken together, amounts to something like a research program disguised as a dictionary.

What I want to do here is walk through a few of these terms, particularly the ones that resonate with (and productively diverge from) David Krakauer’s framing of complexity science as the study of teleonomic matter. Krakauer, as president of the Santa Fe Institute, has been making a related but distinct move: arguing that complexity science’s proper domain is “matter with purpose,” or what he and Christopher Kempes call “problem-solving matter” in their 2024 essay.

Both researchers are circling similar questions. Where they land is instructive for anyone trying to think seriously about agency, intelligence, and what it means to study systems that have agendas.

Recommended listening: if you want one starting point for understanding Krakauer’s views on complexity, I’d suggest Sean Carroll’s conversation with David Krakauer on Mindscape.[1] It’s a few years old, but it offers a well-paced mix of history, context, and theory, along with useful questions from Carroll.

Agential Material and Teleonomic Matter

Start with Levin’s term agential material. This is the stuff engineers work with that has “a significant degree of autonomy, an agenda, perhaps homeostatic capacity or higher, which it will execute independently.” The degree to which your material is agential, Levin argues, is the degree to which engineering is really reverse-engineering, and the result is a collaboration with the material rather than an imposition on it (Levin, “Darwin’s Agential Materials,” Cell Mol Life Sci, 2023).

Krakauer’s teleonomic matter covers overlapping territory. In his conversation with Sean Carroll, Krakauer defined complexity science’s ontological domain as “matter with purpose,” distinguishing it from the ordinary[2] matter studied by physics (Krakauer on Sean Carroll’s Mindscape, Episode 242, July 2023). He locates the origins of this field in the Industrial Revolution, the era when machines (both human-made and evolved) forced a reckoning with purposeful systems. Krakauer writes that complexity science and machine learning both target “teleonomic/purposeful matter,” systems that “encode historical data sets for the purposes of adaptive decision-making” (Krakauer, “Unifying Complexity Science and Machine Learning,” Frontiers in Complex Systems, 2023).

The overlap is real, but the divergence is where things get interesting. Krakauer’s framing stays closer to a computational and information-theoretic register. His teleonomic matter is defined by its capacity to encode, process, and act on information in service of goals. Levin’s agential material pushes further: it emphasizes that the material itself has competencies, that it actively problem-solves in ways that may surprise the engineer, and that the optimal strategy for interacting with it is behavior-shaping intervention rather than micromanagement.[3]

Levin’s framing also carries an implicit ethical weight[4] that Krakauer’s doesn’t quite reach. If the material you work with has an agenda, you are in a relationship with it. A recent preprint Levin co-authored with Richard Watson makes this point explicit:

the inadequacy of the standard machine metaphor[5] in biology stems from treating cognition as something that appears above a complexity threshold, rather than as a continuum present at every scale

(Levin & Watson, “Machines All the Way Up and Cognition All the Way Down,” Seminars in Cell & Developmental Biology, 2026).[6]

The Axis of Persuadability and the Spectrum of Interventions

Levin’s axis of persuadability organizes systems from mechanical clocks to humans along a spectrum defined by what kind of intervention is optimal for prediction and control: rewiring, setpoint editing, training, logical arguments. It’s “an engineering take on the question of agency, designed to bring deep philosophical questions into tight contact with experimental science” (Levin, “Technological Approach to Mind Everywhere (TAME),” Frontiers in Systems Neuroscience, 2022).

Krakauer arrives at a related insight from the complexity side. He argues that the key question is what kind of theory is appropriate for a given system. As he puts it: “Imagine how hard physics would be if particles could think. That is essentially the essence of complexity” (Krakauer, Mindscape 242). Both are saying that the nature of the system should determine the toolkit you bring to it. But Levin makes this operational in a way that Krakauer hasn’t quite matched: the axis of persuadability is a practical guide for experimentalists. It asks, concretely, what kind of approach will persuade this system to do what you want it to do? That question changes the engineer’s posture from one of command to one of negotiation.
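To make one rung of the axis concrete, here is a toy sketch (my own illustration, not code from Levin’s papers) of what “setpoint editing” means for a system with homeostatic capacity. The hypothetical `Homeostat` class is a minimal proportional negative-feedback loop. Its `gain` stands in for the loop’s wiring and its `setpoint` for its goal. Once such a loop exists, you can change what it does by editing the setpoint alone, and it recovers from perturbations without the experimenter specifying each corrective step.

```python
from dataclasses import dataclass


@dataclass
class Homeostat:
    """Toy proportional negative-feedback loop (a hypothetical illustration).

    `setpoint` is the goal state the loop pursues; `gain` is part of its
    fixed 'wiring'. For 0 < gain < 2 the error's magnitude shrinks each step.
    """
    state: float
    setpoint: float
    gain: float = 0.5  # fraction of the current error corrected per step

    def step(self) -> None:
        error = self.setpoint - self.state  # distance to the desired state
        self.state += self.gain * error     # corrective action opposes the error

    def run(self, steps: int) -> float:
        for _ in range(steps):
            self.step()
        return self.state


if __name__ == "__main__":
    h = Homeostat(state=0.0, setpoint=10.0)
    print(f"settled:      {h.run(40):.3f}")
    h.state -= 7.0    # external perturbation; no new instructions are given
    print(f"after kick:   {h.run(40):.3f}")
    h.setpoint = 3.0  # 'setpoint editing': change the goal, not the mechanism
    print(f"new setpoint: {h.run(40):.3f}")
```

In this picture, rewiring would mean editing `step` or `gain` directly. Training and logical argument sit further along the axis and are not modeled here.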

Teleophobia: The Cost of Undershooting

Levin’s coinage teleophobia names something that most researchers working at the boundary of biology and philosophy have felt but rarely articulated: “the unwarranted fear of erring on the side of hypothesizing too much agency in explaining or predicting the behavior of a system.” The term identifies an asymmetry in how the field treats errors. Attributing too much agency is seen as a grievous intellectual sin (anthropomorphism, vitalism, softness). Attributing too little is treated as the conservative default, a respectable position. Levin argues this is backwards. The failure to recognize competencies in a system is just as much an error as over-attribution, with real costs for both understanding and engineering.[7]

This connects to Krakauer’s own argument about the limits of reductionism. In his Frontiers paper, Krakauer invokes Philip Anderson’s “More Is Different” from 1972 to argue that the accumulation of broken symmetries in complex systems means that the laws of physics lose much of their explanatory power at higher scales. The emergentist position, as both Krakauer and Levin have observed, often functions as a placeholder: we say “emergence” and stop asking questions. Levin’s teleophobia names the cultural mechanism that keeps that placeholder in place. It’s the reason researchers reach for mechanical explanations even when the system is demonstrably doing something more interesting.[8]

The Platonic Space: Where Levin Goes Further

In “Ingressing Minds,” Levin proposes that patterns of form and behavior ingress from a structured, ordered, non-physical Platonic space. This space contains low-agency patterns like facts about triangles and prime numbers, but also higher-agency ones: behavioral competencies, kinds of minds. This is a genuinely radical claim, and Levin knows it. He writes on his blog that the paper “holds the record so far” for the most speculative thing he’s published, while noting that the ideas are “very much in flux.”

The empirical motivation is substantial, though. Levin points to planarian flatworms that regenerate heads of other species without genetic change (by disrupting bioelectric circuits), and to kidney tubule cells that restructure themselves around novel constraints in ways no molecular pathway explicitly encodes. The “Ingressing Minds” preprint sketches a research program built around synthetic biology and bioengineered constructs (Xenobots, Anthrobots, chimeras) as “exploration vehicles” for mapping the structure of this space (Levin, “Ingressing Minds,” PsyArXiv, 2025).

Krakauer may resist this framing.[9] His take on complexity science stays within the materialist paradigm, even as it insists on the irreducibility of higher-level descriptions. He invokes Anderson’s broken symmetries and the concept of effective theories from physics: you don’t need to know what neurons are doing to study cognition. Levin is arguing for something stronger than emergence-as-effective-theory. He’s arguing that the patterns themselves have a kind of reality, that the Platonic space is structured and explorable, and that physical embodiments function as pointers or interfaces into that space.

Whether or not you buy this “metaphysics,” the research program it generates is concrete. Levin’s lab (along with collaborators such as Josh Bongard) is already using biobots and bioelectric interventions to probe the space of possible forms in exactly the way the framework suggests.

The question worth sitting with is whether treating the Platonic space as real produces better science than treating it as a useful fiction. Levin’s own stated criterion, which I find compelling as a heuristic, is to prioritize the “forward-looking fecundity of research programs” over “philosophical precommitments such as physicalism or reductionism.”

The Research Program: Pointers, Interfaces, and the Adjacent Possible

The most concrete part of “Ingressing Minds” is the research agenda it outlines, which has two main thrusts.

The first is studying the “adjacent possible” around existing forms. Xenobots teach us about patterns adjacent to those of frog embryos; Anthrobots teach us about patterns adjacent to adult human tissues. By creating synthetic constructs that have no evolutionary history as such, researchers can study what patterns emerge from a given set of biological hardware when it is freed from its usual developmental context.

The second is investigating minimal systems: simple sorting algorithms, chemical droplet robots, gene regulatory network models. In systems where every component is known, any unexpected competency (like the “delayed gratification” Levin’s group found in sorting algorithms) is a genuine signal about what the system can access, with no hidden mechanisms to appeal to (Zhang, Goldstein, & Levin, “Classical sorting algorithms as a model of morphogenesis,” Adaptive Behavior, 2024). Recent work on associative conditioning in gene regulatory networks extends this approach, showing that even simple regulatory architectures can acquire learned associations that increase their causal integration (Pigozzi, Goldstein, & Levin, “Associative Conditioning in Gene Regulatory Network Models Increases Integrative Causal Emergence,” OSF Preprints, 2024).
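The “cell-view” idea behind the sorting-algorithm work can be sketched in a few lines. This is a simplified toy of my own, not the authors’ implementation. Each element acts as a local agent: when randomly activated, it compares itself with its right-hand neighbor and swaps if the pair is out of order. A hypothetical `frozen` set models defective cells that neither initiate nor accept swaps. The `sortedness` metric (fraction of adjacent pairs in order) is also my assumption. Its trajectory is where one would look for temporary dips, the kind of signature the paper analyzes as delayed gratification. The paper itself should be consulted for the actual algorithms and measurements.

```python
import random


def sortedness(arr):
    """Fraction of adjacent pairs in non-decreasing order (1.0 = fully sorted)."""
    if len(arr) < 2:
        return 1.0
    in_order = sum(1 for a, b in zip(arr, arr[1:]) if a <= b)
    return in_order / (len(arr) - 1)


def cell_view_bubble_sort(values, frozen=frozenset(), max_steps=10_000, seed=0):
    """Toy 'cell-view' bubble sort with no central controller.

    At each step one randomly chosen position acts on purely local information:
    it compares itself with its right-hand neighbor and swaps if the two are out
    of order. Values in `frozen` (hypothetical defective cells) never take part
    in a swap. Returns the final arrangement and the sortedness after each step.
    """
    rng = random.Random(seed)
    arr = list(values)
    history = [sortedness(arr)]
    if len(arr) < 2:
        return arr, history
    for _ in range(max_steps):
        i = rng.randrange(len(arr) - 1)  # the cell at position i "wakes up"
        left, right = arr[i], arr[i + 1]
        if left > right and left not in frozen and right not in frozen:
            arr[i], arr[i + 1] = right, left
        history.append(sortedness(arr))
        if history[-1] == 1.0:
            break  # globally sorted, though each cell only ever acted locally
    return arr, history


if __name__ == "__main__":
    final, traj = cell_view_bubble_sort([5, 3, 1, 4, 2])
    print(final, len(traj) - 1, "steps")
    dips = sum(1 for a, b in zip(traj, traj[1:]) if b < a)
    print("steps where sortedness temporarily fell:", dips)
```

Every rule here is visible. Any behavior in the trajectory therefore has to be explained from these few lines, which is what makes minimal systems attractive as probes.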

This second thrust resonates with Krakauer’s own research program. Krakauer and Kempes have argued that computation “does not emerge from silicon, tungsten, insect excreta or other materials” but from “procedures of reason or logic” (Krakauer & Kempes, “Problem-Solving Matter,” Aeon, 2024). Krakauer and Levin are both arguing, in different registers, that the interesting properties of complex systems transcend their substrates. Krakauer frames this as computation being substrate-independent; Levin frames it as patterns ingressing through physical interfaces. The empirical predictions may be closer than the metaphysics would suggest.

The Selflet and What It Means for Continuity

One more term deserves a closer look. A selflet, in Levin’s vocabulary, is a thin temporal slice of a cognitive being, measured in hundreds of milliseconds for a human. The concept emphasizes that cognitive agents are dynamic patterns that reconstruct themselves and their past from currently available memory at each moment. On this model, memories are messages from your past self, and actions constrain and enable your future selves by reshaping the landscape of available options.

Levin developed this idea at length in “Self-Improvising Memory” (Levin, “Self-Improvising Memory: A Perspective on Memories as Agential, Dynamically Reinterpreting Cognitive Glue,” Entropy, 2024), where he argues that memory’s function is to preserve salience rather than fidelity. The persistence of any cognitive system, from a cell to a society, depends on continuous creative reinterpretation of stored patterns. What looks like faithful recall is actually improvisation constrained by the architecture of the system and the structure of the information it carries.

This is a reframing with practical consequences. It dissolves familiar philosophical puzzles about personal identity into engineering questions about how patterns persist and propagate across temporal boundaries. It also connects, in ways I’m still thinking through, to questions about how organizations and research fields maintain continuity.

How does a collective intelligence (whether a body, a lab,[10] or a field) sustain coherent goals across time when its components are constantly turning over? The selflet concept suggests that the answer has less to do with stable storage and more to do with the quality of the improvisational process at each moment of reconstruction.

Where This Leaves Us

Krakauer gives us the institutional and methodological frame: complexity science as the study of teleonomic matter, with its own history, its own theoretical commitments, and its own relationship to the physical sciences.[11] Levin gives us a vocabulary for the empirical frontier, a set of terms designed to make previously invisible phenomena visible and experimentally accessible. Both are insisting that the tools we inherited from physics are insufficient for the systems we now need to understand.

The productive tension between the two is what I want to sit with across this series. Krakauer’s materialist complexity science provides discipline: it demands that claims about agency and purpose be cashed out in terms of effective theories and empirical predictions. Levin’s willingness to venture beyond physicalism provides ambition: it asks whether the framework can be expanded to accommodate phenomena that the standard account struggles with. Neither alone is sufficient, and I’m not yet convinced they fully reconcile.

The next post will work through the Carroll-Krakauer conversation in more detail, particularly Krakauer’s argument that complexity is not pre-paradigmatic and what that commitment costs. Subsequent pieces will trace where the two frameworks converge on information processing as the unit of analysis, where they diverge on whether substrate matters, and what an experimentalist working in the Levin tradition might actually borrow from the SFI vocabulary (and vice versa).

If Levin is right that the Platonic space is explorable, then complexity science will need new tools for characterizing what the exploration finds. If Krakauer is right that effective theories[12] are all we need, then Levin’s vocabulary will have to earn its keep by generating predictions that materialist accounts can’t match. Either way, the work of the next decade in this space will be done by people who can hold both frames at once long enough to test them against each other.

Stay tuned to the Scholarly Notes section of this newsletter, as well as the JOPRO website and Substack, for further updates and opportunities around investigations in complexity science.

Appendix: Levin’s Definitions

These are selected direct quotes from Michael Levin’s Substack:

Agential material - the subject of engineering (by evolution or by human engineers or by cells or whatever) which is not passive and not even just active or computational, but has a significant degree of autonomy - an agenda, perhaps homeostatic capacity or higher, which it will execute independently, and which serves as the target of behavior-shaping interventions (not micromanagement) in optimal control. As an engineer, the degree to which your material is agential is, practically, the degree to which you need to account for, and can exploit, its autonomy; it’s the degree to which engineering is really reverse-engineering, and the degree to which the result is a collaboration with the material. Living matter is a multi-scale agential material, with learning capacity and goal-seeking competencies at every scale, which has massive implications for evolution, bioengineering, and biomedicine. But even non-living, minimal constructs can exhibit some of this.

Axis of persuadability - a spectrum containing different kinds of systems (from mechanical clocks to humans and beyond) that organizes them with respect to what kind of interventions (rewiring, setpoint editing, training, logical arguments, etc.) are optimal for prediction and control of that system. The question is, what kind of approach is needed to persuade the system to do what you want it to do. It’s an engineering take on the question of agency, designed to bring deep philosophical questions into tight contact with experimental science and discovery. See Technological Approach to Mind Everywhere (TAME): an experimentally-grounded framework for understanding diverse bodies and minds.

Platonic space - a non-physical latent space of patterns (such as observed in mathematical objects) which, while not determined by evolutionary history or properties of the physical world, affects physical events and in particular is heavily exploited by evolution (i.e., physics is constrained by these patterns, while biology is what we call the study of systems that are enabled by them). “Platonic space” as a term is used not to necessarily hew close to the views of Plato, Pythagoras, and many others who thought about this issue, but to make a link to the project of Platonist Mathematicians who see themselves as not creating novel structures but discovering pre-existing patterns in an ordered space. I envision a broader latent space which contains not only the (seemingly) low-agency facts of mathematics but more complex, higher-agency patterns we recognize as behavioral competencies (a.k.a., kinds of minds). The causal influence of mathematical facts on the physical world suggests a kind of interactionism: that the mind:brain relationship is symmetrical to the relationship between mathematical objects and physical ones. I propose a research program using synthetic interfaces to those patterns (including biobots and hybrids that do not have a specific evolutionary history to explain their form and behavior) to work out the structure of the space and the mapping between the causal architecture and other properties of physical embodiments (cells, machines, embryos, etc.) and the patterns that ingress into the physical world through them. As a corollary to this view, we can define mathematics as the branch of cognitive science that studies the behavior of those Platonic space denizens whose behavior can be precisely and sharply defined, while computer science and biology study the behavioral properties of more agential, intelligent patterns as observed through specific kinds of dynamic and living interfaces respectively.

Selflet - a Selflet refers to a thin temporal slice of a cognitive being (in a human, it would be measured in hundreds of milliseconds) across time, as in the “space-time bread loaf” of Special Relativity. It emphasizes that cognitive agents like us are not static, perduring entities but a dynamic pattern that has to re-construct itself and its past from the currently-available memory engrams in its brain, body, and environment. Selflets are snapshots of a mind’s “now” moment, and some large number of Selflets integrate into what looks, to observers (and to itself), as an entire agent lasting through time. This model facilitates thinking about memories as messages from your past self, and actions as constraining and enabling your future selves by deforming the energy landscape of the options available to you in the future (via actions that change environmental features and your own structure and information content). This model thus exploits similarities between lateral interactions between agents and “vertical” interactions between a single agent’s past and future selves.

Teleophobia - the unwarranted fear of erring on the side of hypothesizing too much agency in explaining or predicting the behavior of a system, and the usage of conceptual tools and strategies that are best suited for the most mechanical side of the spectrum (coupled with insufficient concern about incorrectly attributing too little). This limiting perspective usually arises from a mistaken belief that agentic properties in a system can be adjudicated linguistically or philosophically, and from a failure to embrace the tools that have been available for decades to rigorously study and empirically discover tractable levels of competency in any given system.[13]

Notes

[1] Having revisited this episode, I will likely give it a full breakdown in a future post, possibly drawing on several of the notes below.

[2] “Ordinary” in the sense of not breaking symmetry and not encoding any information about the world around it: matter under no pressure to select (or be ‘forced to choose’) among alternatives (although that last framing is more my personal lens).

[3] Speculatively, this may connect to the point in the Krakauer and Carroll episode where Krakauer discusses how certain biological agents are only so amenable to processing information. We will cover this convergence (or lack thereof) in a later post.

[4] The ethical implications of diverse intelligence are something we are looking to develop further at the project level at JOPRO; if you have a particular interest in that, consider reaching out.

[5] See also “Living Things Are Not (20th Century) Machines: Updating Mechanism Metaphors in Light of the Modern Science of Machine Behavior” by Bongard and Levin, and this more recent post from 2025: “[…] and also they totally are.”

[6] See also “Darwin’s agential materials: evolutionary implications of multiscale competency in developmental biology” by Michael Levin.

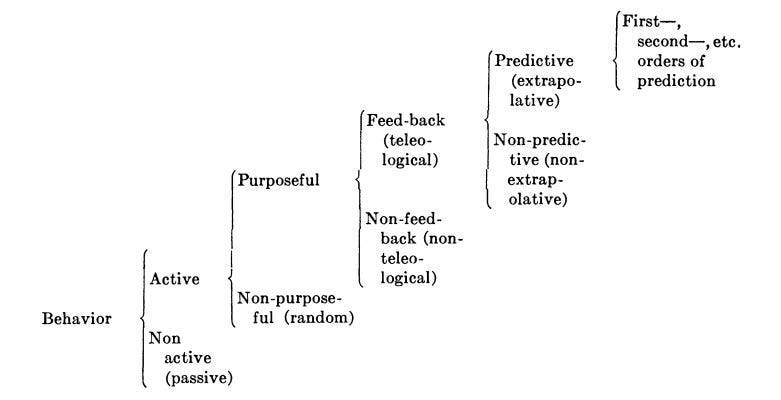

It’s worth considering this in the context of the 1943 paper that Levin often cites, particularly the behavioral category diagram. In the paper, Rosenblueth, Wiener, and Bigelow are particularly aware of the questionable reputation of teleology at the start of the 20th century; much of the paper was an attempt to salvage what merits of purpose (or having a goal) could offer, particularly in terms of negative feedback and distance to a desired state or destination — a precursor to both cybrernetics, complexity science, and what we now know as reinforcement learning in machine learning.

[8] Krakauer’s Foundational Papers in Complexity Science (SFI Press, 2024), a four-volume collection spanning eighty-nine papers from 1922 to 2000, is itself an argument against teleophobia in a different register. By showing how complexity science developed its own paradigmatic commitments distinct from physics, the collection makes the case that higher-level descriptions are genuine theories in their own right, with explanatory power that reduction would dissolve.

[9] To be honest, I’m not yet sure of this. If you have an informed opinion, I would appreciate hearing it in a comment or by email.

[10] Catching “Twirly Birds” and maintaining conversations across time and across the ‘seasons’ of a lab are topics we’ve discussed extensively at Orthogonal Research and Education Lab. I hope to write more about this soon with its director, Dr. Bradly Alicea.

[11] The Krakauer and Carroll episode is interesting in that, when asked whether complexity science is pre-paradigmatic in the Kuhnian sense, Krakauer leans toward saying it is not, pointing to the “four legs” of complexity science that would make up the table (and perhaps its paradigms as well). Yet toward the end of the conversation, Carroll raises the question again, with some uncertainty about which paradigms these are. It is worth noting that the episode was recorded before the Foundational Papers in Complexity Science volumes were fully published, and before Krakauer’s more compact primer, The Complex World, was released.

[12] Krakauer makes this point about effective theories, along with his broader case against certain greedier paradigms or approaches to complexity science, roughly three-fifths of the way through the discussion with Carroll.

[13] A final note on the Carroll-Krakauer discussion: toward the end, the two speculate about the rapid advances of the early and mid-twentieth century, and about how revisiting them with modern insight and retracing the steps could prove very fruitful. I am quite sympathetic to efforts to trace what happened and which paths were or were not taken. One would hope that, with the proliferation of LLMs (and the increased ability to synthesize broad swaths of older, perhaps obscure knowledge domains), this will happen intentionally over time. Some of that intention is going into the JOPRO Futures Center itself, and into other efforts from Orthogonal Research and Education Lab (such as the “Reimagining Cybernetics” project). We are always looking for organizations, programs, and people interested in working in that space.